Over 60% of AI chatbot responses are wrong, study finds

A new study by Columbia Journalism Review's Tow Center for Digital Journalism reveals that popular AI search tools deliver incorrect or misleading information more than 60% of the time, often failing to properly credit original news sources. The findings raise concerns about how these tools undermine trust in journalism and deprive publishers of traffic and revenue.

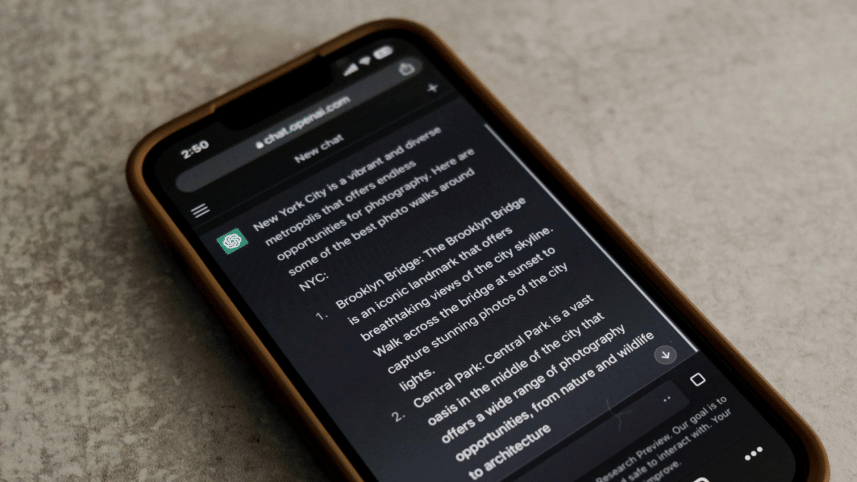

Researchers tested eight generative AI chatbots, including ChatGPT, Perplexity, Gemini, and Grok, by asking them to identify the source of 200 excerpts from recent news articles. The results were alarming: over 60% of responses were incorrect, with chatbots frequently inventing headlines, failing to attribute articles, or citing unauthorised copies of content. Even when chatbots named the right publisher, they often linked to broken URLs, syndicated versions, or unrelated pages.

Confident errors

The chatbots rarely admitted uncertainty, instead presenting wrong answers with unwarranted confidence. For example, ChatGPT provided incorrect information in 134 out of 200 queries but expressed doubt only 15 times. Premium models like Perplexity Pro ($20/month) and Grok 3 ($40/month) performed worse than free versions, offering more "definitively wrong" answers despite their higher cost.

The tools also frequently bypassed publishers' attempts to block their access. Five chatbots ignored the Robots Exclusion Protocol, a widely accepted standard that lets websites tell crawlers which pages they may not access. Perplexity, for instance, correctly cited articles from National Geographic despite the publisher blocking its crawler. Similarly, ChatGPT cited paywalled USA Today content via unauthorised reposts on Yahoo News.
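For readers unfamiliar with how that standard works, the following is a minimal sketch, not taken from the study, of the check a well-behaved crawler is expected to run before fetching a page. It uses Python's standard `urllib.robotparser` module. The bot name `ExampleAIBot`, the `example.com` URL, and the rules themselves are hypothetical and chosen only for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one named AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/news/story"  # hypothetical article URL
    print(is_allowed(ROBOTS_TXT, "ExampleAIBot", url))
    print(is_allowed(ROBOTS_TXT, "SomeOtherBot", url))
```

The protocol is advisory: nothing in it technically prevents a crawler from skipping this check, which is why publishers depend on AI companies choosing to honour it.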

Syndication and fabrication issues

Many chatbots directed users to syndicated articles on platforms like AOL or Yahoo instead of original sources, even when publishers had licensing deals with AI companies. Perplexity Pro cited syndicated versions of Texas Tribune articles despite their partnership, depriving the outlet of proper attribution. Meanwhile, Grok 3 and Gemini often invented URLs: 154 of Grok 3's 200 responses linked to error pages.

While OpenAI and Perplexity have signed content deals with publishers like The Guardian and Time, these agreements didn't ensure accuracy. ChatGPT correctly identified just one of ten excerpts from the San Francisco Chronicle, which has a partnership with OpenAI. According to the research, Time's COO, Mark Howard, noted that AI companies never promised "100% accuracy" in citations, whatever the intent behind the licensing deals.

Implications for publishers

The study highlights a growing crisis for news organisations. AI tools increasingly replace traditional search engines, with nearly 25% of Americans using them for information. But unlike Google, which drives traffic to websites, chatbots summarise content without linking back—starving publishers of ad revenue. Danielle Coffey of the News Media Alliance warned that without control over crawlers, publishers cannot "monetise valuable content or pay journalists."

Misattributions also damage publishers' reputations. As BBC News noted in a recent report, chatbots citing trusted brands like the BBC lend false credibility to incorrect answers, risking public trust in media.

The road ahead

According to the research team, when contacted, OpenAI and Microsoft defended their practices but didn't address specific findings. OpenAI stated it "respects publisher preferences" and helps users "discover quality content", while Microsoft claimed it honours 'robots.txt'.

Researchers stress that flawed citation practices are systemic, not isolated to one tool. They urge AI companies to improve transparency, accuracy, and respect for publisher rights. Howard remains optimistic, noting AI tools are "the worst they'll ever be today", but warns users against trusting free products blindly.

For now, the study underscores a stark reality: as AI reshapes how people access information, news publishers face an uphill battle to protect their content and their credibility.

The full research can be found here.

For all latest news, follow The Daily Star's Google News channel.
